Exam Details

Exam Code: CKS

Exam Name: Linux Foundation Certified Kubernetes Security Specialist (CKS)

Certification: Linux Foundation Certifications

Vendor: Linux Foundation

Total Questions: 46 Q&As

Last Updated: Jun 09, 2025

Linux Foundation Linux Foundation Certifications CKS Questions & Answers

-

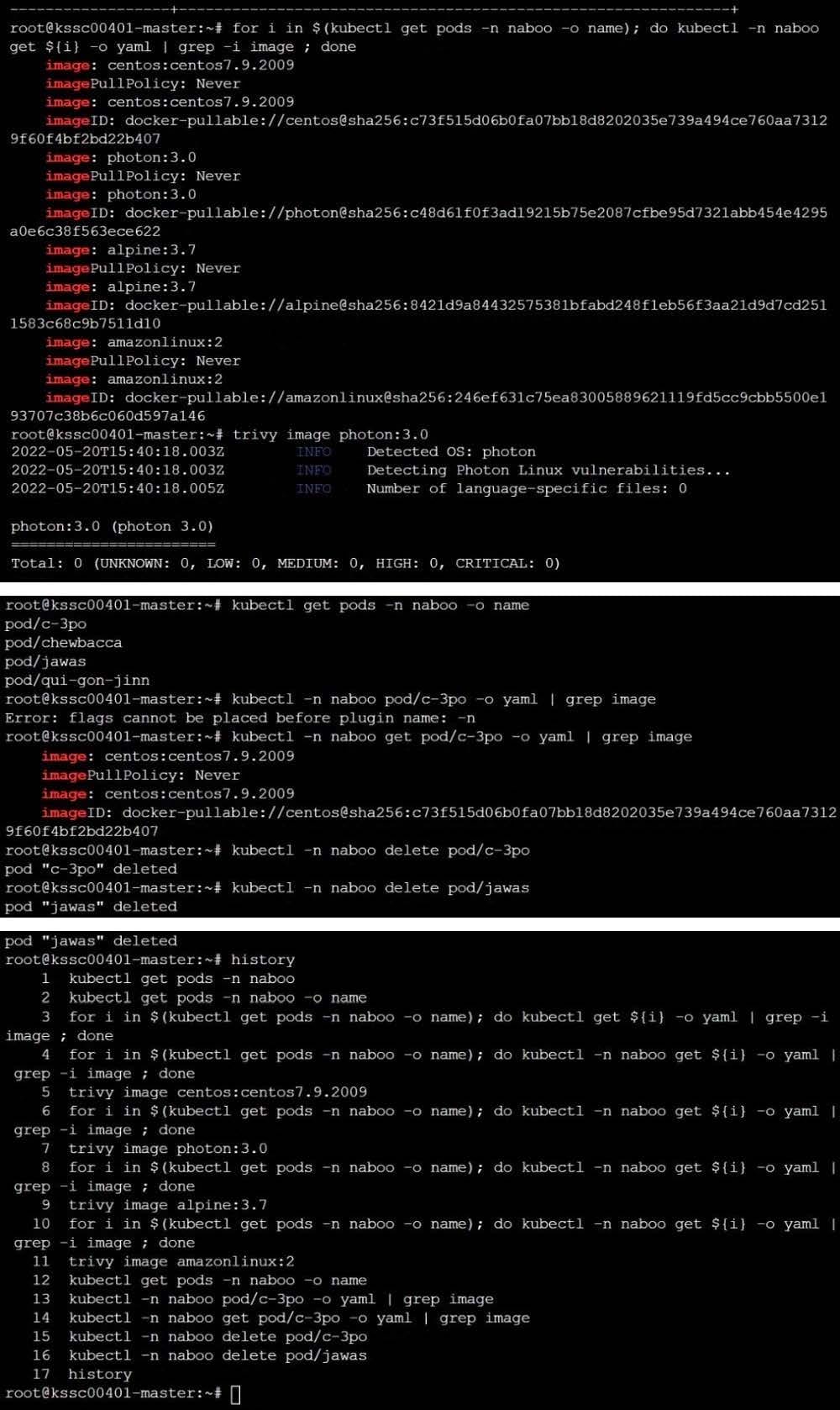

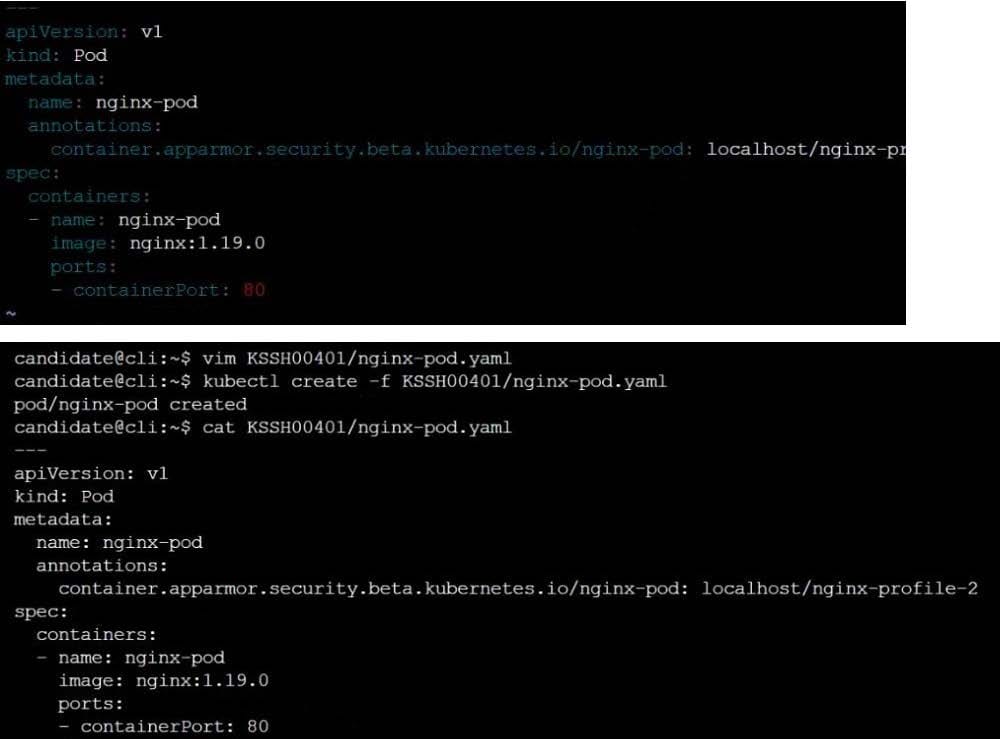

Question 21:

AppArmor is enabled on the cluster's worker node. An AppArmor profile is prepared, but not enforced yet.

Task

On the cluster's worker node, enforce the prepared AppArmor profile located at /etc/apparmor.d/nginx_apparmor.

Edit the prepared manifest file located at /home/candidate/KSSH00401/nginx-pod.yaml to apply the AppArmor profile.

Finally, apply the manifest file and create the Pod specified in it.

A. See the explanation below

B. PlaceHolder
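
Explanation (a sketch, not an official answer): one way to approach the task above is to load the profile on the worker node, then reference it from the Pod manifest. The profile name `nginx-profile` and the container name `nginx` are assumptions; the real values are the name declared inside /etc/apparmor.d/nginx_apparmor and the container name already present in nginx-pod.yaml.

```shell
# Assumed names; replace them with the values found on the node and in the manifest.
profile_name="nginx-profile"   # hypothetical: the name declared inside the profile file
container_name="nginx"         # hypothetical: the container name in nginx-pod.yaml

# On the worker node (needs root), load the profile and check that it is enforced:
#   sudo apparmor_parser -q /etc/apparmor.d/nginx_apparmor
#   sudo aa-status | grep "$profile_name"

# Fragment to merge into the metadata section of nginx-pod.yaml.
snippet="$(mktemp)"
cat > "$snippet" <<EOF
metadata:
  annotations:
    container.apparmor.security.beta.kubernetes.io/${container_name}: localhost/${profile_name}
EOF
cat "$snippet"

# Back on the control host, create the Pod:
#   kubectl apply -f /home/candidate/KSSH00401/nginx-pod.yaml
```

On Kubernetes 1.30 and later the same intent can be expressed with the `securityContext.appArmorProfile` field (`type: Localhost`, `localhostProfile: <name>`) instead of the annotation.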

-

Question 22:

You can switch the cluster/configuration context using the following command:

[desk@cli] $ kubectl config use-context test-account

Task: Enable audit logs in the cluster.

To do so, enable the log backend, and ensure that:

1. Logs are stored at /var/log/Kubernetes/logs.txt

2. Log files are retained for 5 days

3. At most 10 old audit log files are retained

A basic policy is provided at /etc/Kubernetes/logpolicy/audit-policy.yaml. It only specifies what not to log.

Note: The base policy is located on the cluster's master node.

Edit and extend the basic policy to log:

1. Node changes at the RequestResponse level

2. The request body of persistentvolumes changes in the namespace frontend

3. ConfigMap and Secret changes in all namespaces at the Metadata level

Also, add a catch-all rule to log all other requests at the Metadata level.

Note: Don't forget to apply the modified policy.

A. See the explanation below

B. PlaceHolder
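
Explanation (a sketch, not an official answer): the requirements above map onto four audit policy rules and four kube-apiserver flags. The block below only writes both fragments to temporary files so they can be reviewed; merging them into the real policy and into the API server manifest is left as a manual step. It assumes the existing "do not log" rules stay at the top of the `rules:` list.

```shell
# Rules to append under the existing `rules:` list of the base policy.
# The first matching rule wins, so the catch-all goes last.
rules_file="$(mktemp)"
cat > "$rules_file" <<'EOF'
  # 1. Node changes at RequestResponse level
  - level: RequestResponse
    resources:
      - group: ""
        resources: ["nodes"]
  # 2. Request body of persistentvolumes changes in namespace frontend
  - level: Request
    resources:
      - group: ""
        resources: ["persistentvolumes"]
    namespaces: ["frontend"]
  # 3. ConfigMap and Secret changes in all namespaces at Metadata level
  - level: Metadata
    resources:
      - group: ""
        resources: ["configmaps", "secrets"]
  # Catch-all for every other request
  - level: Metadata
EOF

# kube-apiserver flags for the log backend, retention in days, and number of old files.
flags_file="$(mktemp)"
cat > "$flags_file" <<'EOF'
    - --audit-policy-file=/etc/Kubernetes/logpolicy/audit-policy.yaml
    - --audit-log-path=/var/log/Kubernetes/logs.txt
    - --audit-log-maxage=5
    - --audit-log-maxbackup=10
EOF
cat "$rules_file" "$flags_file"
```

If the API server runs as a static Pod, the policy file and the log directory also need hostPath volumes and matching volumeMounts in its manifest; the kubelet restarts the API server once the manifest is saved.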

-

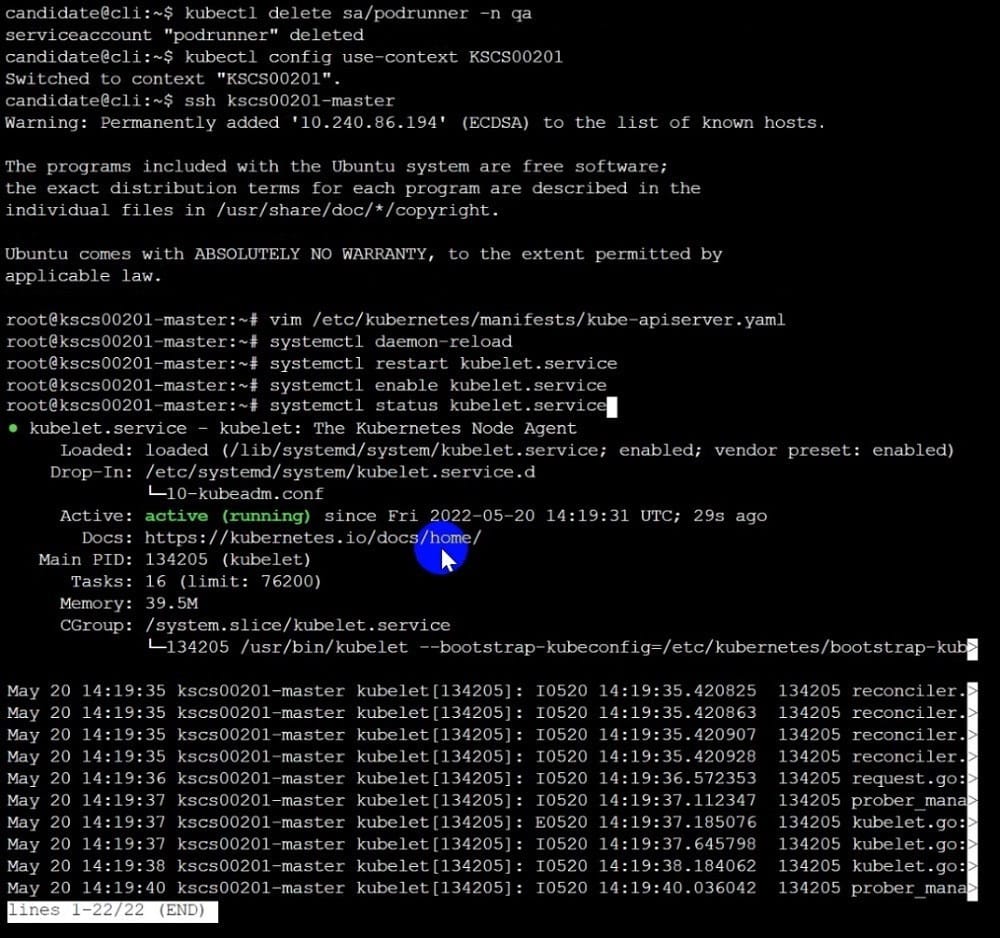

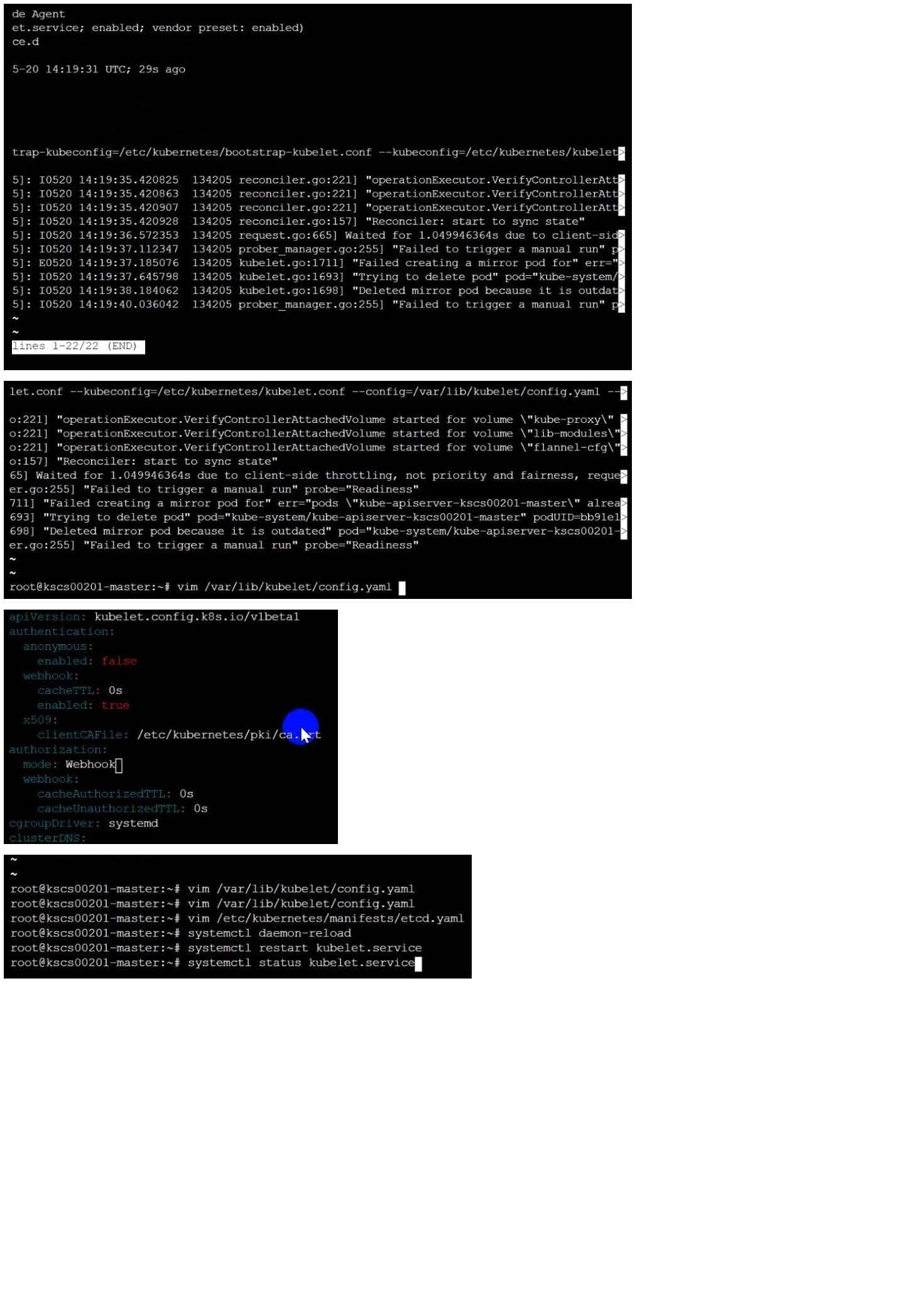

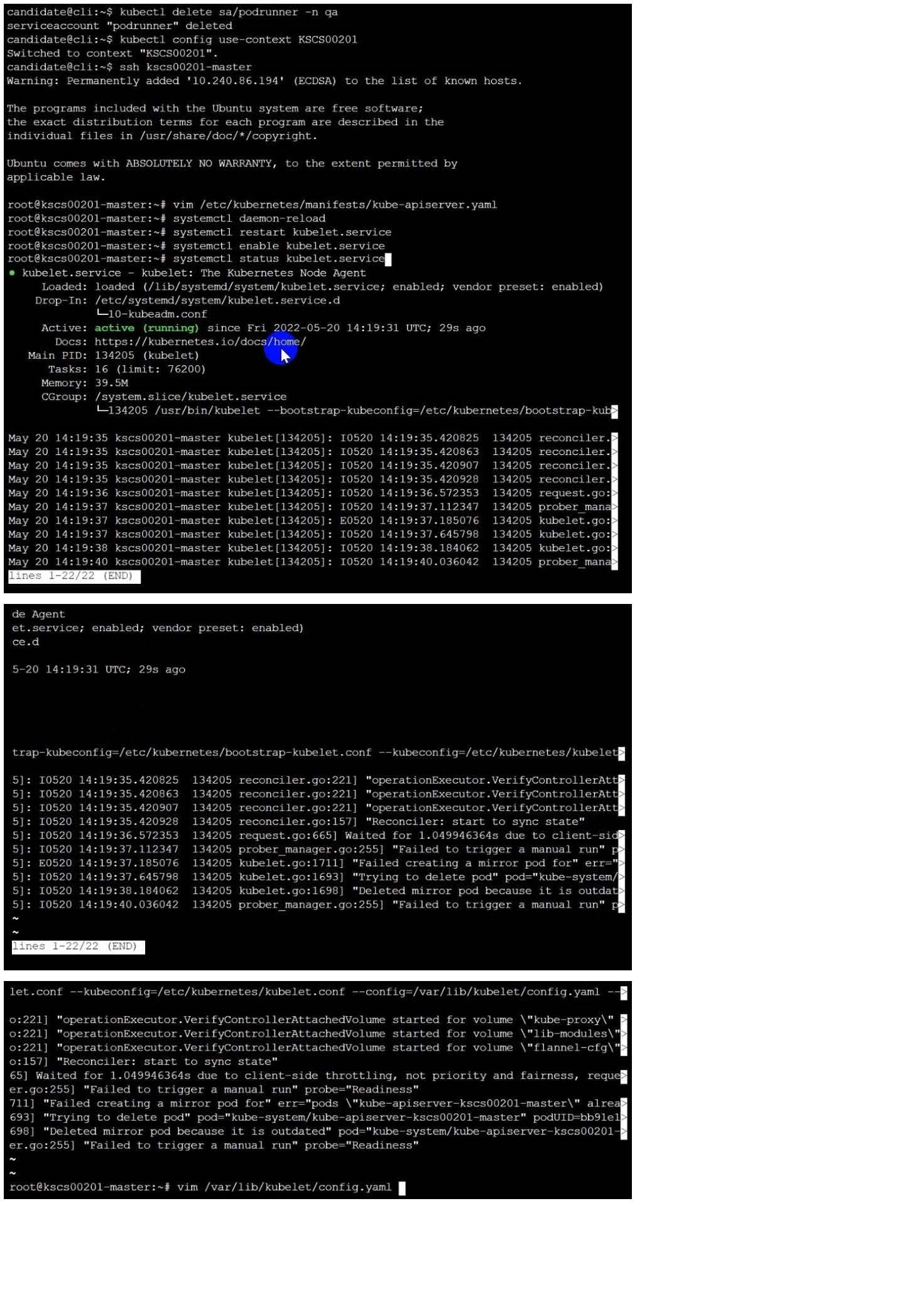

Question 23:

A CIS Benchmark tool was run against the kubeadm-created cluster and found multiple issues that must be addressed immediately.

Fix all issues via configuration and restart the affected components to ensure the new settings take effect.

Fix all of the following violations that were found against the API server:

Fix all of the following violations that were found against the Kubelet:

Fix all of the following violations that were found against etcd:

A. See explanation below.

B. PlaceHolder

-

Question 24:

A service is running on port 389 inside the system. Find the process ID of that process, store the names of all its open files in /candidate/KH77539/files.txt, and delete the binary.

A. See explanation below.

B. PlaceHolder
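
Explanation (a sketch, not an official answer): the two helper functions below are hypothetical names introduced for illustration. They read the Linux /proc filesystem to list a process's open files and to resolve its binary. `lsof -p <pid>` is a common alternative when lsof is installed.

```shell
# Names of all files a process has open, one per line (reads /proc, Linux only).
open_files_of() {
  for fd in /proc/"$1"/fd/*; do
    readlink "$fd"
  done
}

# Absolute path of the binary a process was started from.
binary_of() {
  readlink -f "/proc/$1/exe"
}

# Usage on the node, as root (the PID is read off the ss output by hand):
#   ss -lntup | grep ':389 '        # the last column shows pid=<pid>
#   open_files_of <pid> > /candidate/KH77539/files.txt
#   rm -f "$(binary_of <pid>)"
```

Writing the file list before deleting the binary matters, because the list is taken from the running process.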

-

Question 25:


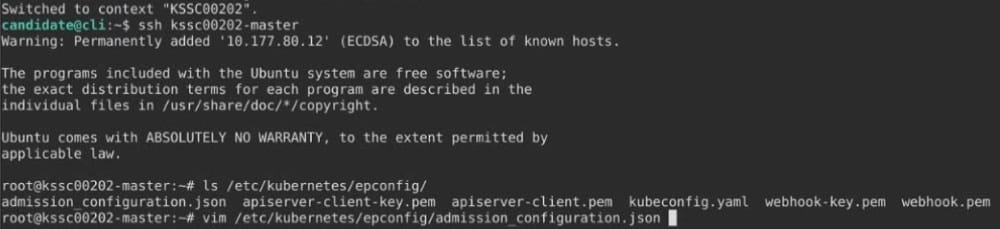

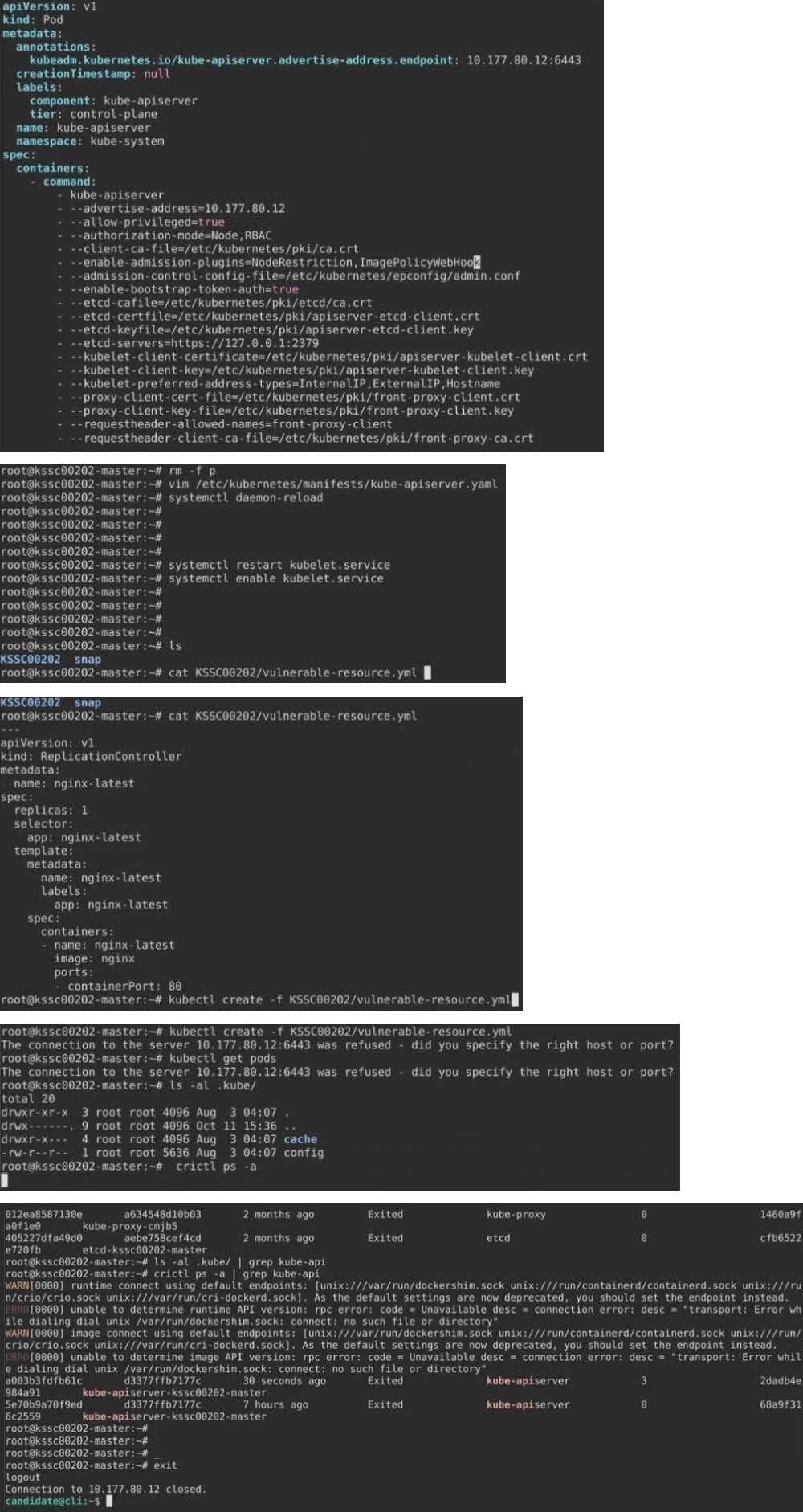

A container image scanner is set up on the cluster, but it's not yet fully integrated into the cluster's configuration. When complete, the container image scanner shall scan for and reject the use of vulnerable images.

Given an incomplete configuration in directory /etc/kubernetes/epconfig and a functional container image scanner with HTTPS endpoint https://wakanda.local:8081/image_policy:

1. Enable the necessary plugins to create an image policy

2. Validate the control configuration and change it to an implicit deny

3. Edit the configuration to point to the provided HTTPS endpoint correctly

Finally, test if the configuration is working by trying to deploy the vulnerable resource /root/KSSC00202/vulnerable-resource.yml.

A. See the explanation below

B. PlaceHolder
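
Explanation (a sketch, not an official answer): the three steps above usually come down to the ImagePolicyWebhook admission plugin, its `defaultAllow` setting, and the `server` field of the kubeconfig the plugin uses. The file names inside /etc/kubernetes/epconfig are not given in the task, so every file name and the CA path below are assumptions, and the block works on stand-in copies in a temporary directory.

```shell
workdir="$(mktemp -d)"

# Stand-in for the incomplete admission configuration (file name is hypothetical).
cat > "$workdir/admission.orig.yaml" <<'EOF'
apiVersion: apiserver.config.k8s.io/v1
kind: AdmissionConfiguration
plugins:
  - name: ImagePolicyWebhook
    configuration:
      imagePolicy:
        kubeConfigFile: /etc/kubernetes/epconfig/kubeconfig.yaml
        allowTTL: 50
        denyTTL: 50
        retryBackoff: 500
        defaultAllow: true
EOF

# Implicit deny: images are rejected when the scanner cannot be reached.
sed 's/defaultAllow: true/defaultAllow: false/' \
  "$workdir/admission.orig.yaml" > "$workdir/admission.yaml"

# Cluster entry of the plugin's kubeconfig, pointing at the scanner endpoint.
cat > "$workdir/kubeconfig-cluster.yaml" <<'EOF'
clusters:
  - name: image-scanner
    cluster:
      certificate-authority: /etc/kubernetes/epconfig/ca.pem  # hypothetical path
      server: https://wakanda.local:8081/image_policy
EOF
cat "$workdir/admission.yaml" "$workdir/kubeconfig-cluster.yaml"

# kube-apiserver flags to enable the plugin (config file name is an assumption):
#   --enable-admission-plugins=NodeRestriction,ImagePolicyWebhook
#   --admission-control-config-file=/etc/kubernetes/epconfig/admission.yaml
# Test; the request is expected to be rejected:
#   kubectl apply -f /root/KSSC00202/vulnerable-resource.yml
```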

-

Question 26:

Cluster: dev

Master node: master1

Worker node: worker1

You can switch the cluster/configuration context using the following command:

[desk@cli] $ kubectl config use-context dev

Task:

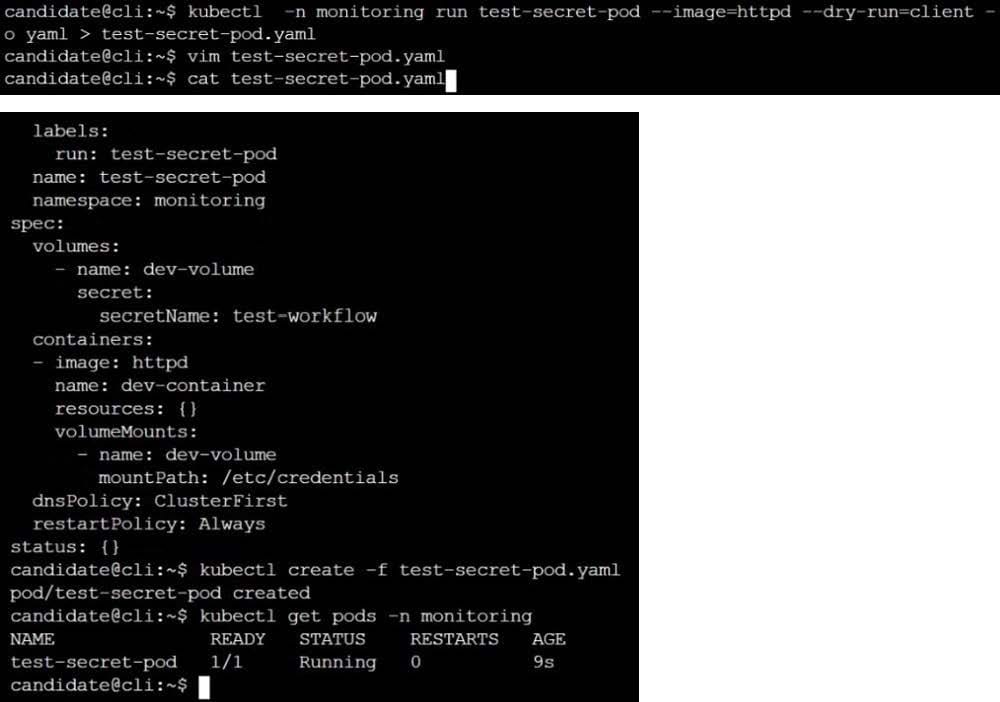

Retrieve the content of the existing secret named adam in the safe namespace.

Store the username field in a file named /home/cert-masters/username.txt, and the password field in a file named /home/cert-masters/password.txt.

1. You must create both files; they don't exist yet.

2. Do not use or modify the created files in the following steps; create new temporary files if needed.

Create a new secret named newsecret in the safe namespace, with the following content:

Username: dbadmin

Password: moresecurepas

Finally, create a new Pod that has access to the secret newsecret via a volume:

Namespace: safe

Pod name: mysecret-pod

Container name: db-container

Image: redis

Volume name: secret-vol

Mount path: /etc/mysecret

A. See the explanation below

B. PlaceHolder
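
Explanation (a sketch, not an official answer): the kubectl commands for the first two parts are shown as comments because they need access to the cluster. The lowercase key names `username` and `password` are an assumption about how the secret stores its fields. The block itself only writes the Pod manifest, built from the values listed above, to a temporary file.

```shell
# Read and decode the existing secret:
#   kubectl get secret adam -n safe -o jsonpath='{.data.username}' | base64 -d > /home/cert-masters/username.txt
#   kubectl get secret adam -n safe -o jsonpath='{.data.password}' | base64 -d > /home/cert-masters/password.txt
# Create the new secret:
#   kubectl create secret generic newsecret -n safe \
#     --from-literal=username=dbadmin --from-literal=password=moresecurepas

# Pod that mounts newsecret as a volume.
pod_manifest="$(mktemp)"
cat > "$pod_manifest" <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: mysecret-pod
  namespace: safe
spec:
  containers:
    - name: db-container
      image: redis
      volumeMounts:
        - name: secret-vol
          mountPath: /etc/mysecret
          readOnly: true
  volumes:
    - name: secret-vol
      secret:
        secretName: newsecret
EOF
cat "$pod_manifest"

# Create the Pod:
#   kubectl apply -f "$pod_manifest"
```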

-

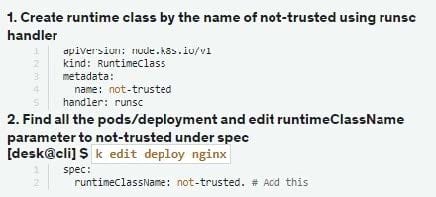

Question 27:

Context:

Cluster: gvisor

Master node: master1

Worker node: worker1

You can switch the cluster/configuration context using the following command:

[desk@cli] $ kubectl config use-context gvisor

Context: This cluster has been prepared to support the runsc runtime handler as well as the traditional one.

Task:

Create a RuntimeClass named not-trusted using the prepared runtime handler named runsc.

Update all Pods in the namespace server to run on newruntime.

A. See the explanation below

B. PlaceHolder
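
Explanation (a sketch, not an official answer): the block below writes a RuntimeClass manifest for the task above to a temporary file. It assumes that "newruntime" in the task refers to the newly created RuntimeClass not-trusted.

```shell
# RuntimeClass that maps the name not-trusted to the runsc handler.
runtimeclass_manifest="$(mktemp)"
cat > "$runtimeclass_manifest" <<'EOF'
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  name: not-trusted
handler: runsc
EOF
cat "$runtimeclass_manifest"

# Create it:
#   kubectl apply -f "$runtimeclass_manifest"
# Then add this line to the spec of every Pod (or Pod template) in namespace server:
#   runtimeClassName: not-trusted
```

The runtimeClassName of a running Pod cannot be changed in place, so standalone Pods have to be deleted and recreated, while Deployments roll out new Pods once their template is edited.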

-

Question 28:

Fix all issues via configuration and restart the affected components to ensure the new setting takes effect.

Fix all of the following violations that were found against the API server:

1. Ensure the --authorization-mode argument includes RBAC

2. Ensure the --authorization-mode argument includes Node

3. Ensure that the --profiling argument is set to false

Fix all of the following violations that were found against the Kubelet:

1. Ensure the --anonymous-auth argument is set to false

2. Ensure that the --authorization-mode argument is set to Webhook

Fix all of the following violations that were found against etcd:

1. Ensure that the --auto-tls argument is not set to true

Hint: Use the kube-bench tool.

A. See the explanation below

B. PlaceHolder
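
Explanation (a sketch, not an official answer): the block below applies the API server fixes to a stand-in copy of the flag list and writes the kubelet settings as a config fragment. It assumes a kubeadm-style layout (/etc/kubernetes/manifests/kube-apiserver.yaml, /etc/kubernetes/manifests/etcd.yaml, /var/lib/kubelet/config.yaml); the starting flag values in the stand-in are made up.

```shell
workdir="$(mktemp -d)"

# Stand-in for the flag list of the API server manifest (values are made up).
cat > "$workdir/kube-apiserver.orig" <<'EOF'
    - --authorization-mode=AlwaysAllow
    - --profiling=true
EOF

# API server: authorization mode must include Node and RBAC, profiling must be off.
sed -e 's/--authorization-mode=.*/--authorization-mode=Node,RBAC/' \
    -e 's/--profiling=.*/--profiling=false/' \
    "$workdir/kube-apiserver.orig" > "$workdir/kube-apiserver.fixed"
cat "$workdir/kube-apiserver.fixed"

# Kubelet: equivalent settings in the kubelet configuration file.
cat > "$workdir/kubelet-config-fragment.yaml" <<'EOF'
authentication:
  anonymous:
    enabled: false
authorization:
  mode: Webhook
EOF

# etcd: in the etcd manifest, set --auto-tls=false or remove the flag.
# Restart the kubelet so its new settings take effect:
#   sudo systemctl daemon-reload && sudo systemctl restart kubelet
```

Static Pod manifests are picked up by the kubelet when saved, so the API server and etcd restart on their own; rerunning kube-bench afterwards shows whether the findings are cleared.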

-

Question 29:

You can switch the cluster/configuration context using the following command:

[desk@cli] $ kubectl config use-context qa

Context:

A pod fails to run because of an incorrectly specified ServiceAccount

Task:

Create a new service account named backend-qa in an existing namespace qa, which must not have access to any secret.

Edit the frontend Pod YAML to use the backend-qa service account.

Note: You can find the frontend pod yaml at /home/cert_masters/frontend-pod.yaml

A. See the explanation below

B. PlaceHolder
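
Explanation (a sketch, not an official answer): one reading of "must not have access to any secret" is that the ServiceAccount should not automount its API token and should have no RBAC bindings. The block below writes such a ServiceAccount manifest to a temporary file; that reading is an assumption.

```shell
# ServiceAccount without an automounted API token.
sa_manifest="$(mktemp)"
cat > "$sa_manifest" <<'EOF'
apiVersion: v1
kind: ServiceAccount
metadata:
  name: backend-qa
  namespace: qa
automountServiceAccountToken: false
EOF
cat "$sa_manifest"

# Create it:
#   kubectl apply -f "$sa_manifest"
# In /home/cert_masters/frontend-pod.yaml, set under spec:
#   serviceAccountName: backend-qa
# Then create the Pod:
#   kubectl apply -f /home/cert_masters/frontend-pod.yaml
```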

-

Question 30:

Your organization's security policy includes:

1. ServiceAccounts must not automount API credentials

2. ServiceAccount names must end in "-sa"

The Pod specified in the manifest file /home/candidate/KSCH00301/pod-manifest.yaml fails to schedule because of an incorrectly specified ServiceAccount.

Complete the following tasks:

Task

1. Create a new ServiceAccount named frontend-sa in the existing namespace qa. Ensure the ServiceAccount does not automount API credentials.

2. Using the manifest file at /home/candidate/KSCH00301/pod-manifest.yaml, create the Pod.

3. Finally, clean up any unused ServiceAccounts in namespace qa.

A. See the explanation below

B. PlaceHolder
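
Explanation (a sketch, not an official answer): creating the ServiceAccount and the Pod are shown as commented kubectl commands. For the cleanup step, the hypothetical helper `unused_service_accounts` compares the ServiceAccounts that exist with the ones Pods reference and prints the difference.

```shell
# Create the ServiceAccount and switch off token automounting:
#   kubectl create serviceaccount frontend-sa -n qa
#   kubectl patch serviceaccount frontend-sa -n qa -p '{"automountServiceAccountToken": false}'
# Set serviceAccountName: frontend-sa in the manifest, then:
#   kubectl apply -f /home/candidate/KSCH00301/pod-manifest.yaml

# Print the names in $1 (all ServiceAccounts) that do not appear in $2
# (ServiceAccounts referenced by Pods). Both arguments are space-separated lists.
unused_service_accounts() {
  all_file="$(mktemp)"
  used_file="$(mktemp)"
  # $1 and $2 are intentionally unquoted so each name lands on its own line.
  printf '%s\n' $1 | sort -u > "$all_file"
  printf '%s\n' $2 | sort -u > "$used_file"
  comm -23 "$all_file" "$used_file"
  rm -f "$all_file" "$used_file"
}

# Usage against the cluster:
#   all="$(kubectl get serviceaccounts -n qa -o jsonpath='{.items[*].metadata.name}')"
#   used="$(kubectl get pods -n qa -o jsonpath='{.items[*].spec.serviceAccountName}')"
#   unused_service_accounts "$all" "$used"
#   kubectl delete serviceaccount <name> -n qa
```

The `default` ServiceAccount may show up as unused; Kubernetes recreates it automatically, so it is normally left alone.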

