Exam Details

Exam Code: HDPCD

Exam Name: Hortonworks Data Platform Certified Developer

Certification: Hortonworks Certifications

Vendor: Hortonworks

Total Questions: 60 Q&As

Last Updated: Jul 11, 2025

Hortonworks Hortonworks Certifications HDPCD Questions & Answers

-

Question 41:

You have user profile records in your OLTP database that you want to join with web logs you have already ingested into the Hadoop file system. How will you obtain these user records?

A. HDFS command

B. Pig LOAD command

C. Sqoop import

D. Hive LOAD DATA command

E. Ingest with Flume agents

F. Ingest with Hadoop Streaming

-

Question 42:

You use the hadoop fs -put command to write a 300 MB file using an HDFS block size of 64 MB. Just after this command has finished writing 200 MB of this file, what would another user see when trying to access this file?

A. They would see Hadoop throw a ConcurrentFileAccessException when they try to access this file.

B. They would see the current state of the file, up to the last bit written by the command.

C. They would see the current state of the file through the last completed block.

D. They would see no content until the whole file is written and closed.
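The block arithmetic behind this scenario can be sketched as follows. This is only an illustration of the numbers in the question (300 MB file, 64 MB blocks, 200 MB written); the function name `block_progress` is hypothetical, and the sketch makes no claim about what a concurrent reader actually sees.

```python
import math

def block_progress(file_mb, block_mb, written_mb):
    """Return (total_blocks, completed_blocks, completed_mb, in_progress_mb).

    Hypothetical helper: pure arithmetic on the sizes given in the question.
    """
    total_blocks = math.ceil(file_mb / block_mb)   # blocks the finished file will occupy
    completed_blocks = written_mb // block_mb      # blocks already filled completely
    completed_mb = completed_blocks * block_mb     # data held in completed blocks
    in_progress_mb = written_mb - completed_mb     # data in the block still being written
    return total_blocks, completed_blocks, completed_mb, in_progress_mb

print(block_progress(300, 64, 200))
```

With these inputs, three of the five blocks are complete (192 MB), and the remaining 8 MB sit in the block that is still being written.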

-

Question 43:

What is the disadvantage of using multiple reducers with the default HashPartitioner and distributing your workload across your cluster?

A. You will not be able to compress the intermediate data.

B. You will no longer be able to take advantage of a Combiner.

C. By using multiple reducers with the default HashPartitioner, output files may not be in globally sorted order.

D. There are no concerns with this approach. It is always advisable to use multiple reducers.
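To see how hash partitioning spreads keys across reducers, here is a minimal Python simulation. It assumes the commonly documented HashPartitioner rule, `(key.hashCode() & Integer.MAX_VALUE) % numReduceTasks`, and emulates Java's `String.hashCode()` for ASCII keys only; the function names and sample keys are hypothetical.

```python
def java_string_hash(s):
    """Emulate Java's String.hashCode() as an unsigned 32-bit value (ASCII keys assumed)."""
    h = 0
    for ch in s:
        h = (31 * h + ord(ch)) & 0xFFFFFFFF  # wrap around like a 32-bit int
    return h

def hash_partition(key, num_reducers):
    """Assumed HashPartitioner rule: clear the sign bit, then take the modulus."""
    return (java_string_hash(key) & 0x7FFFFFFF) % num_reducers

def simulate(keys, num_reducers):
    """Assign keys to reducers, then sort within each reducer, as the shuffle would."""
    parts = [[] for _ in range(num_reducers)]
    for k in keys:
        parts[hash_partition(k, num_reducers)].append(k)
    return [sorted(p) for p in parts]

parts = simulate(["d", "a", "c", "b"], 2)
concatenated = [k for p in parts for k in p]
print(parts)          # each reducer's output is sorted on its own
print(concatenated)   # reading the output files in order
```

In this run each reducer's keys are sorted locally, but reading the two outputs one after the other gives `b, d, a, c`, which is not a single sorted sequence.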

-

Question 44:

You need to move a file titled "weblogs" into HDFS. When you try to copy the file, you can't. You know you have ample space on your DataNodes. Which action should you take to relieve this situation and store more files in HDFS?

A. Increase the block size on all current files in HDFS.

B. Increase the block size on your remaining files.

C. Decrease the block size on your remaining files.

D. Increase the amount of memory for the NameNode.

E. Increase the number of disks (or size) for the NameNode.

F. Decrease the block size on all current files in HDFS.

-

Question 45:

What does the following WebHDFS command do?

curl -i -L "http://host:port/webhdfs/v1/foo/bar?op=OPEN"

A. Make a directory /foo/bar

B. Read a file /foo/bar

C. List a directory /foo

D. Delete a directory /foo/bar
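The structure of a WebHDFS request can be sketched in Python as below. The helper `build_webhdfs_url`, the host name, and the port `50070` are assumptions for illustration; the operation names and HTTP methods follow the WebHDFS REST API documentation. The sketch only builds the URL and does not contact a cluster.

```python
from urllib.parse import quote, urlencode

# HTTP method used by each operation, per the WebHDFS REST API documentation.
WEBHDFS_METHODS = {
    "OPEN": "GET",          # open and read a file
    "LISTSTATUS": "GET",    # list a directory
    "MKDIRS": "PUT",        # make a directory
    "DELETE": "DELETE",     # delete a file or directory
}

def build_webhdfs_url(host, port, path, op):
    """Hypothetical helper: return (http_method, url) for a WebHDFS operation."""
    method = WEBHDFS_METHODS[op]
    url = f"http://{host}:{port}/webhdfs/v1{quote(path)}?{urlencode({'op': op})}"
    return method, url

# Host and port are placeholders, not values from the question.
print(build_webhdfs_url("host", 50070, "/foo/bar", "OPEN"))
```

The `op` query parameter, together with the HTTP method, selects the operation; the path after `/webhdfs/v1` names the HDFS file or directory it applies to.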

-

Question 46:

In a MapReduce job, the reducer receives all values associated with same key. Which statement best describes the ordering of these values?

A. The values are in sorted order.

B. The values are arbitrarily ordered, and the ordering may vary from run to run of the same MapReduce job.

C. The values are arbitrarily ordered, but multiple runs of the same MapReduce job will always have the same ordering.

D. Since the values come from mapper outputs, the reducers will receive contiguous sections of sorted values.

-

Question 47:

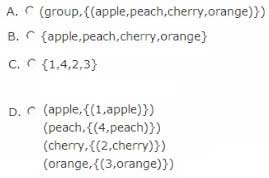

Consider the following two relations, A and B.

What is the output of the following Pig commands?

X = GROUP A BY S1;

DUMP X;

A. Option A

B. Option B

C. Option C

D. Option D

-

Question 48:

Your client application submits a MapReduce job to your Hadoop cluster. Identify the Hadoop daemon on which the Hadoop framework will look for an available slot to schedule a MapReduce operation.

A. TaskTracker

B. NameNode

C. DataNode

D. JobTracker

E. Secondary NameNode

-

Question 49:

What does the following command do?

register '/piggybank/pig-files.jar';

A. Invokes the user-defined functions contained in the jar file

B. Assigns a name to a user-defined function or streaming command

C. Transforms Pig user-defined functions into a format that Hive can accept

D. Specifies the location of the JAR file containing the user-defined functions

-

Question 50:

Identify which best defines a SequenceFile.

A. A SequenceFile contains a binary encoding of an arbitrary number of homogeneous Writable objects

B. A SequenceFile contains a binary encoding of an arbitrary number of heterogeneous Writable objects

C. A SequenceFile contains a binary encoding of an arbitrary number of WritableComparable objects, in sorted order.

D. A SequenceFile contains a binary encoding of an arbitrary number of key-value pairs. Each key must be the same type. Each value must be the same type.
